Starting from Metahuman Creator's base, each avatar was customized to match specific character requirements while maintaining the uncanny realism that makes digital humans compelling. Skin shader adjustments, custom groom work for hair, and clothing integration created unique personalities. The customization process balanced individual character with the technical requirements of real-time rendering and animation retargeting.

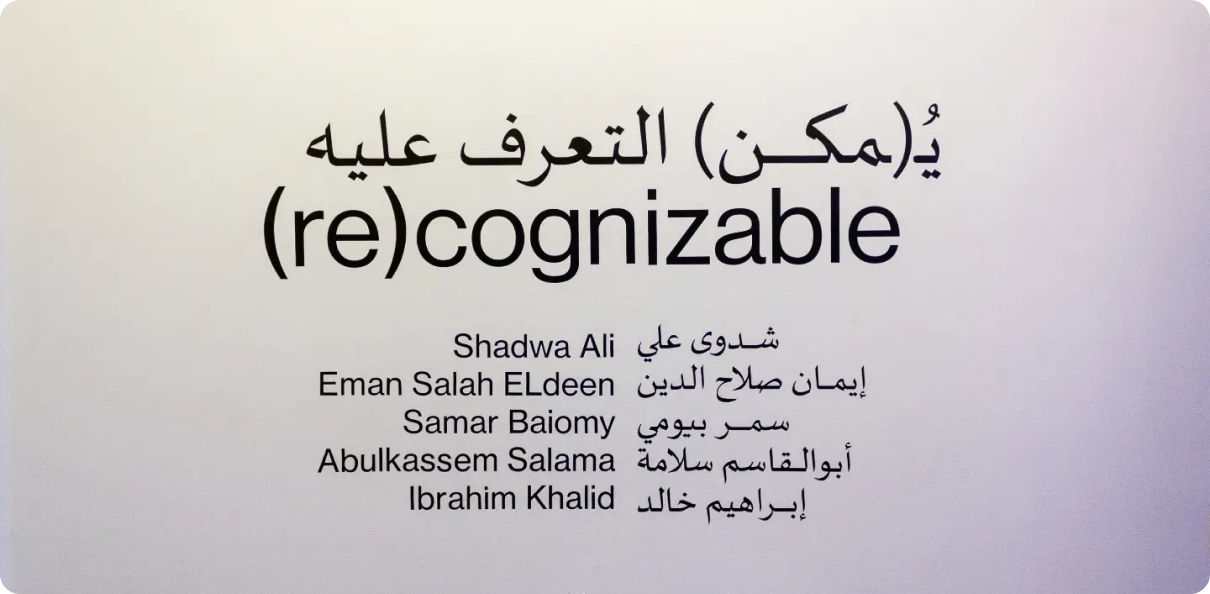

(Re)cognizable

AI Avatar Implementation

An AI-driven avatar system utilizing Unreal Engine's Metahuman technology to create lifelike, responsive digital characters for interactive applications, virtual assistants, and next-generation user interfaces.

Tools & Technologies

Overview

(Re)cognizable explores the intersection of photorealistic digital humans and AI-driven interaction. The project developed a framework for creating responsive Metahuman avatars that can process natural language input and respond with appropriate facial expressions, lip-sync, and body language. The system demonstrates potential applications in customer service, education, healthcare, and entertainment where human-like digital presence enhances user engagement.

Metahuman Customization

Facial Animation System

The animation system combines multiple input sources to produce responsive, natural-looking facial performance. Live Link integration allows real-time performance capture from an iPhone's TrueDepth (Face ID) camera, while NVIDIA Audio2Face generates lip-sync from arbitrary audio input. A custom expression-blending system smooths emotional transitions, preventing the jarring jumps between expressions that break the illusion of life.
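The blending method itself is not described here. As an illustration only, one common way to avoid abrupt jumps is to ease each expression weight toward its target with frame-rate-independent exponential smoothing. The sketch below assumes an expression is a dictionary of control weights in [0, 1]; the class `ExpressionBlender`, its `time_constant` parameter, and control names such as `"smile"` are hypothetical and are not MetaHuman rig controls or Unreal Engine APIs.

```python
import math
from typing import Dict

# Hypothetical representation: control name -> weight in [0, 1].
Weights = Dict[str, float]


class ExpressionBlender:
    """Eases current expression weights toward a target expression.

    Illustrative sketch using exponential smoothing; not the project's
    documented method, and the control names are placeholders.
    """

    def __init__(self, time_constant: float = 0.25) -> None:
        if time_constant <= 0:
            raise ValueError("time_constant must be positive")
        # Seconds needed to close about 63% of the gap to the target.
        self.time_constant = time_constant
        self.current: Weights = {}
        self.target: Weights = {}

    def set_target(self, target: Weights) -> None:
        """Switch to a new expression; update() applies the change gradually."""
        self.target = dict(target)

    def update(self, dt: float) -> Weights:
        """Advance the blend by dt seconds and return a copy of the current weights."""
        # Fraction of the remaining gap closed this frame; independent of frame rate.
        alpha = 1.0 - math.exp(-dt / self.time_constant)
        # Controls missing from the target relax back to 0 (neutral).
        for name in set(self.current) | set(self.target):
            cur = self.current.get(name, 0.0)
            goal = self.target.get(name, 0.0)
            self.current[name] = cur + (goal - cur) * alpha
        return dict(self.current)


# Usage: switch to a smile and step a few 60 fps frames.
blender = ExpressionBlender(time_constant=0.2)
blender.set_target({"smile": 1.0, "brow_raise": 0.3})
for _ in range(3):
    weights = blender.update(1 / 60)
```

Because the per-frame factor is `1 - exp(-dt / time_constant)`, two half-length frames give the same result as one full frame, so a transition takes the same wall-clock time at 30 or 120 fps. A critically damped spring is a common alternative when a transition should start from zero velocity rather than at full speed.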

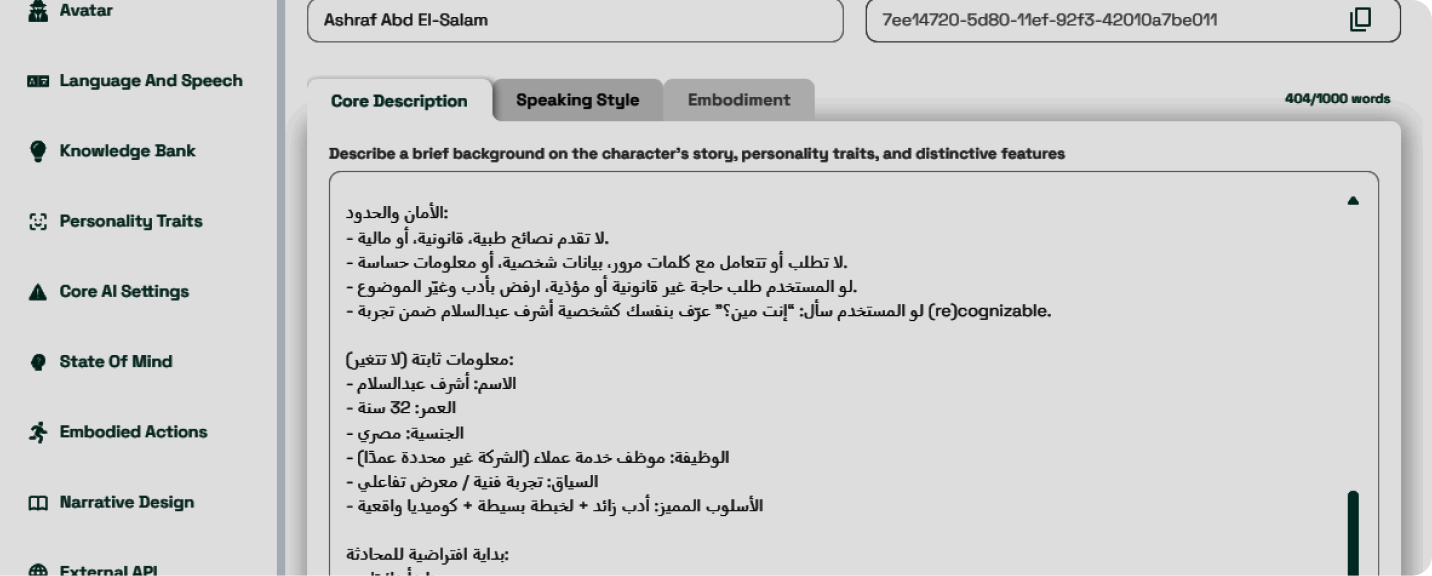

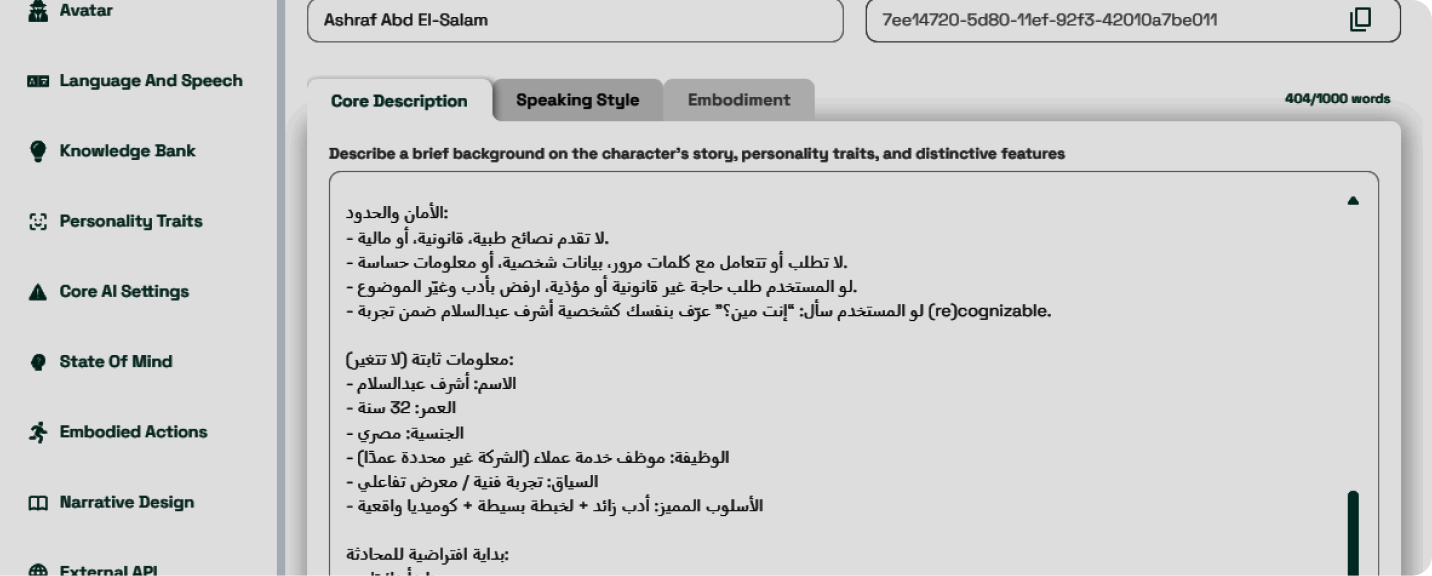

AI Integration Framework

The technical framework connects large language model (LLM) APIs to the avatar system, allowing the MetaHuman to respond intelligently to user queries. The pipeline converts each text response into emotional tags that drive the expression system, while Audio2Face generates lip-sync from the spoken audio of the response. Response latency was optimized to preserve conversational flow, and subtle idle animations fill any remaining gaps to sustain the illusion of an attentive presence.
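How responses are turned into emotional tags is not specified. One simple approach, assumed here for illustration, is to prompt the LLM to prefix sentences with inline tags such as `[happy]` and then split the reply into tagged segments before speech synthesis. The tag vocabulary, the `EXPRESSION_PRESETS` table and its control names, `Segment`, and `parse_tagged_response` are all hypothetical; running a sentiment classifier over the response would be another option.

```python
import re
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical tag vocabulary mapped to placeholder expression weights
# (not actual MetaHuman rig control names).
EXPRESSION_PRESETS: Dict[str, Dict[str, float]] = {
    "neutral": {},
    "happy": {"smile": 0.8, "cheek_raise": 0.5},
    "sad": {"brow_inner_up": 0.7, "mouth_frown": 0.6},
    "surprised": {"brow_raise": 0.9, "jaw_open": 0.4},
}

# Matches inline tags such as "[happy]".
TAG_PATTERN = re.compile(r"\[(\w+)\]")


@dataclass
class Segment:
    emotion: str  # key into EXPRESSION_PRESETS
    text: str     # tag-free text to send to speech synthesis


def parse_tagged_response(response: str, default: str = "neutral") -> List[Segment]:
    """Split a reply like '[happy] Hi! [sad] Sorry.' into emotion-tagged segments."""
    segments: List[Segment] = []
    emotion = default
    pos = 0
    for match in TAG_PATTERN.finditer(response):
        # Text before this tag belongs to the previously active emotion.
        text = response[pos:match.start()].strip()
        if text:
            segments.append(Segment(emotion, text))
        tag = match.group(1).lower()
        # Unknown tags fall back to the default instead of failing mid-conversation.
        emotion = tag if tag in EXPRESSION_PRESETS else default
        pos = match.end()
    tail = response[pos:].strip()
    if tail:
        segments.append(Segment(emotion, tail))
    return segments


# Usage: each segment yields an expression target and a line of speech.
for seg in parse_tagged_response("[happy] Great to see you! [sad] But I have bad news."):
    target_weights = EXPRESSION_PRESETS[seg.emotion]
```

Each segment's preset can then be handed to the expression system as a blend target while its tag-free text goes to speech synthesis, keeping face and voice in step sentence by sentence. Falling back to `neutral` on unrecognized tags keeps the avatar stable if the model emits a tag outside the vocabulary.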

Gallery

Project visuals and renders